Brick them all 🧱

And none of us will be allowed to have them

Only datacenters and only fortune 500 companies will be able to use anything Nvidia

But can it run Crysis?

Can it run Doom?

Jesus fucking Christ, 288GB. And this is why I can’t have 16?

so that’s why my 5070 laptop has 8 GBs of VRAM…

my old 1080 also had 8 GBs of VRAM

Goodbye, sweet hardware. You deserved better and so did we.

This is what all the parts we wanted went to

Don’t worry, you can rent them for $30 a month and stream all your video games.

Not even, just the ones they deign to allow

Yeah, I wonder how long it will take them to clue in that no one wants to trade gaming for an AI fucking girlfriend ffs…

I mean if they came with a cool android body we could talk about it. It should at least be able to do cleaning and cooking. Otherwise my wife won’t like it.

Until the money stops pouring in I suppose

Idk… I’m a tad excited to buy one for peanuts on eBay in a couple years for a local smart home upgrade. Heck, when the bubble pops, maybe they can sell power from all those generators back to the city and lower our utility bills too. /S

They might be useful for rendering, and I’d love to see how smoothly Teardown Game could run with all those cores.

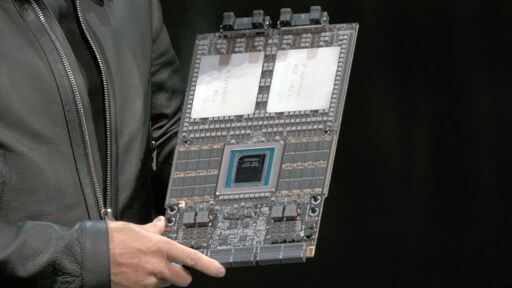

Nvidia’s Vera Rubin platform is the company’s next-generation architecture for AI data centers that includes an 88-core Vera CPU, Rubin GPU with 288 GB HBM4 memory, Rubin CPX GPU with 128 GB of GDDR7, NVLink 6.0 switch ASIC for scale-up rack-scale connectivity, BlueField-4 DPU with integrated SSD to store key-value cache, Spectrum-6 Photonics Ethernet, and Quantum-CX9 1.6 Tb/s Photonics InfiniBand NICs, as well as Spectrum-X Photonics Ethernet and Quantum-CX9 Photonics InfiniBand switching silicon for scale-out connectivity.

The buzzwords make my head hurt. Sounds like a copypasta

288 GB HBM4 memory

jfc…

Looking at the spec’s… fucking hell these things probably cost over 100k.

I wonder if we’ll see a generational performance leap with LLM’s scaling to this much memory.

Current models are speculated at 700 billion parameters plus. At 32 bit precision (half float), that’s 2.8TB of RAM per model, or about 10 of these units. There are ways to lower it, but if you’re trying to run full precision (say for training) you’d use over 2x this, something like maybe 4x depending on how you store gradients and updates, and then running full precision I’d reckon at 32bit probably. Possible I suppose they train at 32bit but I’d be kind of surprised.

Edit: Also, they don’t release it anymore but some folks think newer models are like 1.5 trillion parameters. So figure around 2-3x that number above for newer models. The only real strategy for these guys is bigger. I think it’s dumb, and the returns are diminishing rapidly, but you got to sell the investors. If reciting nearly whole works verbatim is easy now, it’s going to be exact if they keep going. They’ll approach parameter spaces that can just straight up save things into their parameter spaces.

Sure, but giant context models are still more prone to hallucination and reinforcing confidence loops where they keep spitting out the same wrong result a different way.

Sorry, I’m not saying that’s a good thing. It’s not just the context that’s expanding, but the parameter of the base model. I’m saying at some point you just have saved a compressed version of the majority of the content (we’re already kind of there) and you’d be able to decompress it even more losslessly. This doesn’t make it more useful for anything other than recreating copyrighted works.

Ah I see, however you do bring up another point. I really think we need a true collection of experts able to communicate without the need for natural language and then a “translation” layer to output natural language or images to the user. The larger parameters would allow the injection of experts into the pipeline.

Thanks for the clarification, and also for the idea. I think one thing we can all agree on is that the field is expanding faster than any billionaire or company understands.

Fundamentally no, linear progress requires exponential resources. The below article is about AGI but transformer based models will not benefit from just more grunt. We’re at the software stage of the problem now. But that doesn’t sign fat checks, so the big companies are incentivized to print money by developing more hardware.

https://timdettmers.com/2025/12/10/why-agi-will-not-happen/

Also the industry is running out of training data

https://arxiv.org/html/2602.21462v1

What we need are more efficient models, and better harnessing. Or a different approach, reinforced learning applied to RNNs that use transformers has been showing promise.

Yeah I’ve read that before. I don’t necessarily agree with their framework. And even working within their framework, this article is about a challenge to their third bullet.

I’m just not quite ready to rule out the idea that if you can scale single models above a certain boundary, you’ll get a fundamentally different/ novel behavior. This is consistent with other networked systems, and somewhat consistent with the original performance leaps we saw (the ones I think really matter are ones from 2019-2023, its really plateaued since and is mostly engineering tittering at the edges). It genuinely could be that 8 in a MoE configuration with single models maxing out each one could actually show a very different level of performance. We just don’t know because we just can’t test that with the current generation of hardware.

Its possible there really is something “just around the corner”; possible and unlikely.

What we need are more efficient models, and better harnessing. Or a different approach, reinforced learning applied to RNNs that use transformers has been showing promise.

Could be. I’m not sure tittering at the edges is going to get us anywhere, and I think I would agree with just… the energy density argument coming out of the dettmers blog. Relative to intelligent systems, the power to compute performance (if you want to frame it like that) is trash. You just can’t get there in computation systems like we all currently use.

LLMs can already use way more I believe, they don’t really run them on a single one of these things.

The HBM4 would likely be great for speed though.

Yeah they’re going to cost as much as a house.

I think we’ll see much larger active portions of larger MOEs, and larger context windows, which would be useful.

The non LLM models I run would benefit a lot from this, but I don’t know of I’ll ever be able to justify the cost of how much they’ll be.

Can’t wait for it to hit secondhand market in november

So we can do what? De solder the individual ram chips and populate them on custom dimms?

Pass.

It’s too late for all of these rack mounted, AI oriented products, those resources are already spent, they’re gone for us.

It’s like they took the world’s supply of high tech computer components and locked them in a room with a sign over the door that says “douchebags only”. And even if you got into that room, those components are only compatible with douchebag OS, so even if you completely cleared that room of douchebags, you still have to throw all their useless party favors in the dumpster.

Bringus is gonna make a weird gaming computer by shoving one into a movie rental kiosk.

who. fucking. cares.

You’re in a community called “Technology” and it’s got a bunch of upvotes, so us, presumably.

The news is important, but when it comes to user-end AI in general, big fucking meh.

Question is, how long before it makes it to the next DGX Spark? Some people don’t have $10B to burn.